$ curl -fsSL | sudo gpg --dearmor -o /etc/apt/keyrings/docker.gpg

Now you can add the correct repository for your system by running this command:

$(lsb_release -cs) stable" | sudo tee /etc/apt//docker.list > /dev/null

Update your package list to include the contents of the Docker repository, then check that the containerd service has started up. Its status line reads "containerd.service - containerd container runtime".

When the network comes back up after a partition in which both sides kept accepting writes, you cannot decide which changes are the correct ones. That is the reason all consistent distributed data stores require a majority of nodes.

There is no way to run either part of the cluster without the other; it would create a logical problem. If any part of your cluster could run on its own, a network partition between the two data centers could leave the parts unable to see each other while each otherwise runs without any problem. Both parts of the cluster would then assume the master role and start making changes.
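The split-brain argument comes down to simple arithmetic: only a strict majority may accept writes, and two disjoint sides of a partition can never both hold one. Here is a minimal sketch in POSIX shell. The function names `quorum_size` and `has_quorum` are made up for illustration and are not part of etcd or kubeadm.

```shell
#!/bin/sh
# Hypothetical helpers for illustration only; not part of etcd or kubeadm.

# Smallest number of members that forms a strict majority of $1 members.
quorum_size() {
  echo $(( $1 / 2 + 1 ))
}

# Succeeds when $2 reachable members out of $1 total form a majority.
has_quorum() {
  [ "$2" -ge "$(quorum_size "$1")" ]
}

# A 3-member cluster split 2/1 by a network partition:
for side in 2 1; do
  if has_quorum 3 "$side"; then
    echo "side with $side of 3 members: may accept writes"
  else
    echo "side with $side of 3 members: must stop accepting writes"
  fi
done
```

Because at most one side can pass the check, there is never a second set of changes to reconcile once the network heals.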

Solution hint: Restoring etcd quorum is not as easy a task as it seems. You have to back up the data from your node and then create a new etcd cluster. You cannot simply remove members, because that requires having a quorum; what you should be able to do is create a new etcd cluster using the data from the old one. I could not find any good guide on how to do that with kubeadm, which is why this is not a perfect answer. Kubeadm offers a little bit of help with this.

Long-term notes: If you have two failure domains, you will have to pick which one is the more important one.
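The point about two failure domains can be checked with a little arithmetic: however the members are split across two domains, one domain always holds the majority, and losing that domain loses quorum. The sketch below assumes a hypothetical helper `check_layout` that takes the member counts in domains A and B; it is not a kubeadm or etcd tool.

```shell
#!/bin/sh
# Hypothetical helper for illustration only.
# Arguments: number of etcd members in domain A, number in domain B.
check_layout() {
  a=$1
  b=$2
  total=$(( a + b ))
  majority=$(( total / 2 + 1 ))
  for lost in A B; do
    # Members that remain reachable after the named domain is lost.
    if [ "$lost" = A ]; then remaining=$b; else remaining=$a; fi
    if [ "$remaining" -ge "$majority" ]; then
      echo "$a+$b members, domain $lost lost: quorum kept ($remaining of $total)"
    else
      echo "$a+$b members, domain $lost lost: quorum lost ($remaining of $total)"
    fi
  done
}

check_layout 2 1   # 2 masters in one data center, 1 in the other
check_layout 3 2   # more members do not change the picture
```

In both layouts only the loss of the smaller domain is survivable, which is why one domain has to be chosen as the more important one.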

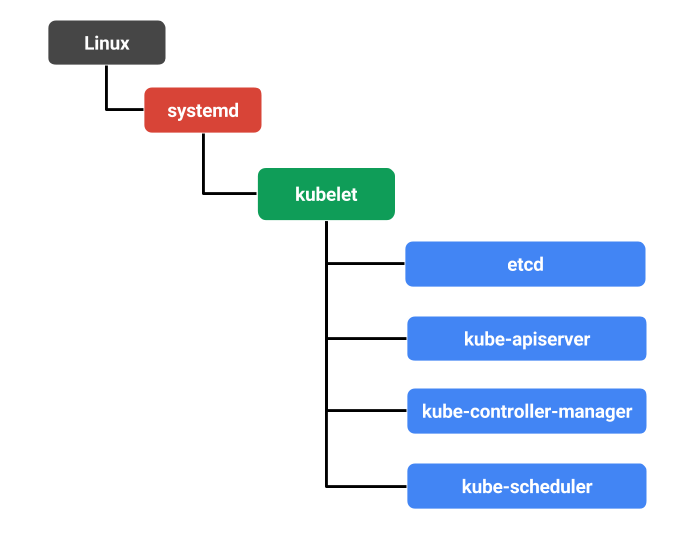

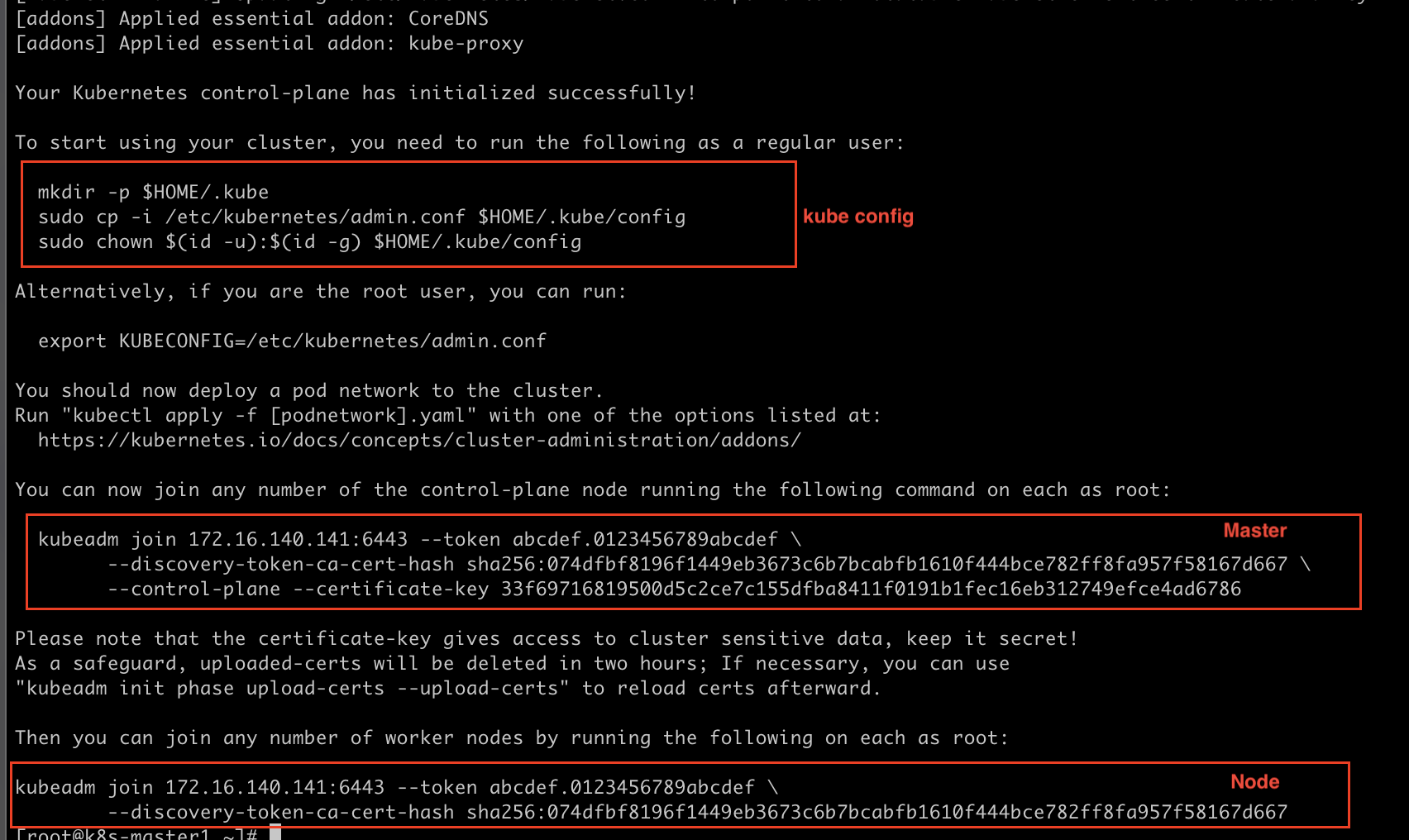

We are trying to create a k8s cluster environment that spans two data centers. We have only 7 bare-metal (BM) servers. We chose to have 2 masters and 2 worker nodes in data center 1. In a sunny-day scenario this works, but in case of a disaster at data center 1, the single master in data center 2 becomes unresponsive and does not start any pods on the worker nodes in data center 2, so our application service becomes unavailable. Is there a solution to recover this master node back to service other than bringing the other two masters back online?

We set up the cluster using the kubeadm stacked control plane approach:

$ sudo kubeadm init --control-plane-endpoint "LOAD_BALANCER_DNS:LOAD_BALANCER_PORT" --upload-certs

We added two more masters to the cluster using the command below:

$ sudo kubeadm join 192.168.0.200:6443 --token 9vr73a.a8uxyaju799qwdjv --discovery-token-ca-cert-hash

When 2 masters are down, the API server on the third stops listening and kubectl also stops working. We tried restarting the available master, but it did not start listening and we were unable to perform any actions on it. Is there any way to bring this master back into service?

This is not strictly an answer on how specifically to solve your problem, but I hope it will help you somewhat anyway. I suppose you are using stacked masters, meaning etcd members and control plane nodes are co-located. The Kubernetes control plane itself is able to run with only 1 of 3 nodes online. etcd is the key-value store that Kubernetes uses to store all its configuration (or at least most k8s distributions do; k8s is pluggable so you can change it, but kubeadm definitely uses etcd). But an etcd cluster needs a majority of nodes, a quorum, to agree on updates to the cluster state.
